A Robot Just Drove Across Mars Without Human Control For Two Days.

Here's what that actually means and why it changes more than space exploration.

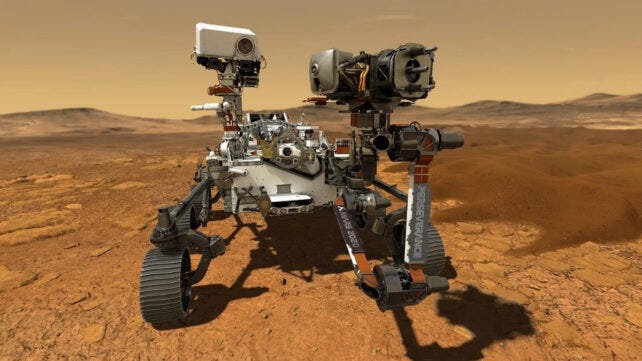

Last month, NASA’s Perseverance rover drove across Mars.

That by itself isn’t news. It’s done that hundreds of times.

What changed was this: no human planned the route.

Instead of a team on Earth studying images, debating risks, and mapping out every move, an AI system did it.

It analyzed the terrain, looked for hazards, chose the safest path, and told the rover where to go.

The same photos and data that normally go to human planners were handed entirely to the machine.

It studied the landscape.

It decided.

It drove.

For the first time in history, an AI, not a human team, planned a rover’s route on another planet.

Pause on that.

This matters not just because it happened on Mars, but because of what it shows about where AI really stands today.

This wasn’t a lab test. It wasn’t a simulation.

It happened 140 million miles away, in an environment where a wrong move could damage a $2.7 billion rover beyond repair.

And NASA was comfortable letting the AI take that risk.

Here’s how it works in simple terms.

The AI was trained on thousands of examples of terrain.

It learned what safe ground looks like. It learned what dangerous patterns look like: loose soil, steep slopes, rocks that could trap a wheel.

When it receives new images, it doesn’t “think” about them the way a human would.

It doesn’t imagine Mars. It doesn’t reason about exploration.

It compares what it sees to everything it has learned before.

Very quickly.

Then it calculates the path that has the highest chance of being safe.
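To make "highest chance of being safe" concrete, here is a minimal sketch in Python. It is not NASA's planner; it is a toy under stated assumptions: the terrain is a small grid, each cell carries a made-up hazard probability, and the cells are treated as independent. The function name `safest_path` and the grid values are hypothetical. The trick is that maximizing a product of safety probabilities is the same as minimizing a sum of `-log(p_safe)`, which an ordinary shortest-path search (Dijkstra) can handle.

```python
import heapq
import math


def safest_path(hazard, start, goal):
    """Return (path, probability_safe) for the route that maximizes the
    product of per-cell safety probabilities on a 4-connected grid.

    `hazard[r][c]` is an assumed, illustrative probability that a cell is unsafe.
    """
    rows, cols = len(hazard), len(hazard[0])

    def cost(cell):
        # Turn a safety probability into an additive cost: -log(p_safe).
        r, c = cell
        p_safe = 1.0 - hazard[r][c]
        return math.inf if p_safe <= 0.0 else -math.log(p_safe)

    best = {start: cost(start)}   # lowest known cost to reach each cell
    parent = {}                   # back-pointers for rebuilding the route
    queue = [(best[start], start)]

    while queue:
        total, cell = heapq.heappop(queue)
        if cell == goal:
            break
        if total > best.get(cell, math.inf):
            continue  # stale queue entry
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if not (0 <= nr < rows and 0 <= nc < cols):
                continue
            new_total = total + cost(nxt)
            if new_total < best.get(nxt, math.inf):
                best[nxt] = new_total
                parent[nxt] = cell
                heapq.heappush(queue, (new_total, nxt))

    if goal not in best or math.isinf(best[goal]):
        return None, 0.0  # no route avoids certain failure

    path = [goal]
    while path[-1] != start:
        path.append(parent[path[-1]])
    path.reverse()
    return path, math.exp(-best[goal])


# Hypothetical 3x3 terrain: the middle column is risky near the top.
hazard = [
    [0.05, 0.60, 0.05],
    [0.05, 0.90, 0.05],
    [0.05, 0.05, 0.05],
]
path, p_safe = safest_path(hazard, start=(0, 0), goal=(0, 2))
print(path)
print(round(p_safe, 3))
```

In this toy grid the direct route is only two steps but crosses a risky cell, so the planner takes the long way around the bottom row. The shortest path and the safest path are not the same thing.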

It doesn’t know it’s on Mars.

It knows what safe terrain looks like.

That difference is important.

AI is extremely powerful at recognizing patterns quickly and consistently, especially in complex environments.

But it depends on patterns it has already seen during training.

When something completely new appears, something outside those learned patterns, it may hallucinate and produce a confident answer that is wrong. That’s where human judgment still matters most.

Mars just became a real-world test of that balance.

The lesson is not that machines are taking over space exploration.

It’s that, in the right conditions, AI decision-making is mature enough to operate in places humans physically can’t.

It can act independently, assess risk quickly, and make choices where communication delays make human control slow and impractical.
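How slow is that delay? A quick back-of-the-envelope calculation, assuming the 140 million mile figure used above (the real Earth-Mars distance changes constantly as both planets orbit) and a signal traveling at the speed of light. The constant and function names are just for illustration.

```python
MILES_TO_MARS = 140e6                # distance quoted in this article; varies over time
LIGHT_SPEED_MILES_PER_S = 186_282    # speed of light, rounded


def signal_delay_minutes(distance_miles):
    """One-way travel time of a radio signal, in minutes."""
    return distance_miles / LIGHT_SPEED_MILES_PER_S / 60


one_way = signal_delay_minutes(MILES_TO_MARS)
round_trip = 2 * one_way
print(f"one way: {one_way:.1f} min, round trip: {round_trip:.1f} min")
```

That works out to roughly 12.5 minutes each way, or about 25 minutes before a human on Earth could even see the result of a command. Steering a rover in real time is simply not an option.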

A rover driving itself across another planet may sound like science fiction.

But it’s really a sign of something more grounded: AI is no longer just assisting human decisions in controlled settings.

In certain environments, it’s already making them.